
It provides a web scraping solution that allows you to scrape data from websites and organize them into data sets. Why you should use it: Import.io is a SaaS web data platform. Who is this for: Enterprises with budget looking for integration solutions on web data. Or you can simply follow the Octoparse user guide to scrape website data easily for free. If you are looking for a one-stop data solution, Octoparse also provides web data service. It also provides ready-to-use web scraping templates to extract data from Amazon, eBay, Twitter, BestBuy, etc. With its intuitive interface, you can scrape web data within points and clicks. Why you should use it: Octoparse is a free for life SaaS web data platform. Who is this for: Professionals without coding skills who need to scrape web data at scale. This web scraping software is widely used among online sellers, marketers, researchers and data analysts. If you have programming skills, it works best when you combine this library with Python. It is the top Python parser that has been widely used. Why you should use it: Beautiful Soup is an open-source Python library designed for web-scraping HTML and XML files. Who is this for: developers who are proficient at programming to build a web scraper/web crawler to crawl the websites. I just put them together under the umbrella of software, while they range from open-source libraries, browser extensions to desktop software and more.īest 30 Free Web Scraping Tools 1. Here is a list of the 30 most popular free web scraping software. Also, if you're a data scientist or a researcher, using a web scraper definitely raises your working effectiveness in data collection. Luckily, there is data scraping software available for people with or without programming skills.

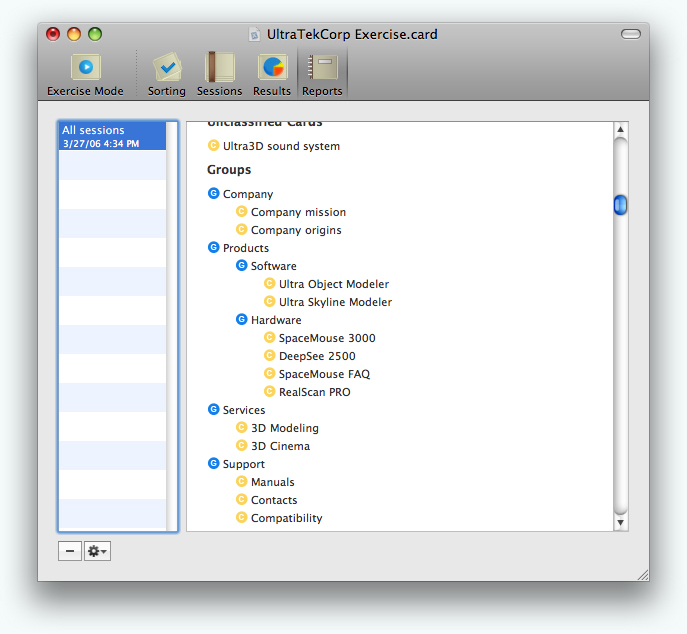

It can be difficult to build a web scraper for people who don’t know anything about coding. It turns web data scattered across pages into structured data that can be stored in your local computer in a spreadsheet or transmitted to a database. * It'd be nice if you could export the distance tables as a spreadsheet (CSV format) and print themĭefinitely worth a try.Web scraping (also termed web data extraction, screen scraping, or web harvesting) is a technique of extracting data from websites. * Control over how the reports and cluster tree is printed is *very* basic and limited * You can't print out sheets of cards so you still have the option of performing the exercise and entering the data manually. there's no way to merge data from seperate installs together. * There's no facailitation to have mulitple participants on separate computers - ie. * Exercise mode has password protection to keep participants from exiting the modeĪll that said, it does have a few things that could be improved: * Allows you to enter a "profile" of each participant which users select from when they perform the exercise and can later be filtered against

* It provides complete session management and an kiosk-like exercise interface for participants where the can move virtual cards around on a virtual table or organize via an "outline" mode We've previously been using an old IBM Windows app for analysis, but that required making the cards by hand and then entering the data manually to generate the analysis tools.Īt just a quick glance, this app looks to be an incredible tool that can finally replace the one we've been using. Wow wow wow! As one of the few web and application development companies in our area that actually use card sorting as an exercise for informational architecture, I've been searching (unsuccessfully) for something like this for a LOOONG time.
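As a rough illustration of how web scraping turns page markup into structured rows ready for a spreadsheet, here is a minimal Python sketch. It deliberately uses only the standard library (`html.parser` and `csv`) rather than Beautiful Soup or any of the tools mentioned on this page, so it runs without installing anything. The sample HTML, the class names `product`, `name` and `price`, and the helper names are all made up for the example; a real scraper would first download the page and would need to match that site's actual markup.

```python
import csv
import io
from html.parser import HTMLParser

# Hypothetical sample page; a real scraper would download this HTML first.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Widget</span><span class="price">9.99</span></li>
  <li class="product"><span class="name">Gadget</span><span class="price">24.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects the text of the name and price spans for each product item."""

    def __init__(self):
        super().__init__()
        self.rows = []      # one dict per <li class="product">
        self._field = None  # field currently being read, if any

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "li" and css_class == "product":
            self.rows.append({})  # start a new record
        elif tag == "span" and css_class in ("name", "price") and self.rows:
            self._field = css_class

    def handle_data(self, data):
        # Only keep text that sits inside a span we care about.
        if self._field is not None:
            self.rows[-1][self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None


def scrape_products(html):
    """Parse the HTML string and return a list of {'name', 'price'} dicts."""
    parser = ProductParser()
    parser.feed(html)
    return parser.rows


def rows_to_csv(rows):
    """Serialize the records as CSV text that a spreadsheet can open."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"], lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = scrape_products(SAMPLE_HTML)
csv_text = rows_to_csv(rows)
print(csv_text)
```

Writing `csv_text` to a file gives something a spreadsheet application can open directly. A dedicated parser such as Beautiful Soup would typically replace the hand-written `ProductParser` class with a few selector calls, which is most of its appeal for developers.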
